New Code Suggests Google Assistant Coming to Chrome OS Devices

XDA-Developers is reporting that new code in an upcoming version of Chrome OS contains references to Google Assistant. Chrome OS is used in a number of devices, mostly desktop and laptop computers. Adding Google Assistant to these devices will likely put them on par with Microsoft Windows devices, which offer Cortana, and Apple’s macOS, which offers Siri. XDA reports:

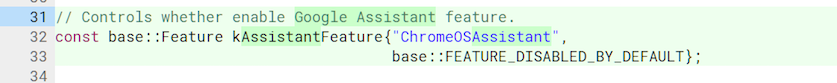

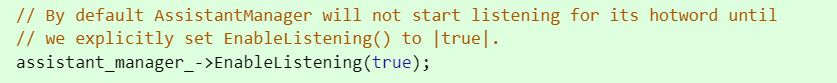

“As we were digging through the Chromium Gerrit, a merged commit caught our eye. The text description for the commit reads, ‘add Assistant feature flags changes accordingly’ and, ‘Assistant service provides core Google Assistant functionality’.

“It seems to lay the groundwork for native Google Assistant integration on Chrome OS devices, which isn’t too surprising — in June 2017, a commit in the Gerrit specifically mentioned ‘[adding] Google Assistant settings to the settings UI’ and ‘[launching] an Intent when the Google Assistant Settings is tapped.’”

Image Credit: XDA-Developers

Voice Assistants Everywhere – Particularly Where We Compute

The importance here is not that Google is bringing Assistant to Chrome OS devices. That is overdue. The larger significance is that voice assistants were initially thought of as tools for mobile devices, useful when hands-free operation was necessary or as a faster input mechanism than typing with thumbs on small screens. That perception extended to the convenience of hands-free operation of smart speakers, where there was virtually no mechanical interaction option. Computers are different. All of the manual controls we need are right there. We are accustomed to typing and touching to input information and to receiving information visually. Why voice in this scenario? Three reasons:

- Input speed

- Conversational flexibility

- Habituation

Speed, Flexibility and Habits

First, most people can type between 40 and 60 words per minute but speak at a rate of 150 words per minute, so voice can be far more efficient than typing. You also don’t have to open a specific application or place your cursor in the search box. You just speak. As a result, getting to the point of input is also faster. It may not seem like much time, but it is the type of shortcut that humans seek out both consciously and unconsciously.
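To put rough numbers on the speed gap, here is a minimal sketch of the arithmetic using the typing and speaking rates cited above. The 10-word query length and the function names are illustrative assumptions, not figures from any study.

```python
# Rates cited in the article, in words per minute.
SPEAKING_WPM = 150
TYPING_WPM_RANGE = (40, 60)

# Hypothetical query length, chosen only for illustration.
QUERY_WORDS = 10


def speedup(speaking_wpm: float, typing_wpm: float) -> float:
    """Return how many times faster speaking is than typing."""
    return speaking_wpm / typing_wpm


def seconds_for(words: int, wpm: float) -> float:
    """Return the seconds needed to enter `words` words at `wpm`."""
    return words * 60 / wpm


if __name__ == "__main__":
    spoken = seconds_for(QUERY_WORDS, SPEAKING_WPM)
    for typing_wpm in TYPING_WPM_RANGE:
        typed = seconds_for(QUERY_WORDS, typing_wpm)
        ratio = speedup(SPEAKING_WPM, typing_wpm)
        print(
            f"Typing at {typing_wpm} wpm: {typed:.0f}s typed vs "
            f"{spoken:.0f}s spoken ({ratio:.2f}x faster by voice)"
        )
```

Under these assumptions, speaking is between 2.5 and 3.75 times faster than typing, before counting the time saved by not having to open an application or find a search box.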

Second, conversational queries don’t require the tortured syntax we use today to get computers to do things for us. We can ask for what we want the way we normally communicate instead of learning a new syntax. And, we are not constrained by what is on the screen. Even if there is no button to press, we can make a request and potentially receive the answer or outcome we seek.

Finally, using voice with smart speakers and on mobile devices is starting to form habits among consumers. Those habits are bound to migrate to other computing tasks even where we have more robust input mechanisms. Once you know you can touch your phone to perform tasks, you naturally want to do that on your laptop or television. When you become accustomed to speaking to computers, you come to expect it. As voice becomes a pervasive interface, devices need to accommodate user expectations.

PCs are Just Another Surface to be Accommodated

Microsoft claims over 140 million monthly active Cortana users. Most of those are undoubtedly on PCs. Amazon made a point at CES of launching a Windows app that brings Alexa to the desktop. Google needs to keep up, and you can easily imagine that Chrome OS devices are only a first step. Could Google be kept out of desktops running Microsoft and Apple operating systems? The Chrome browser is an obvious point of entry, but computers represent the surface where Google has the least leverage in expanding Google Assistant access.
