Siri, Google Assistant and Alexa Hacked with High Frequency Dolphin Attack

Researchers in China have demonstrated a security flaw that appears to be common across voice assistants. FastCompany reports that the researchers showed that voice commands translated into ultrasonic frequencies, which are inaudible to humans, are easily recognized by voice assistants. This means “Hey Siri,” “Ok, Google,” or “Alexa” could all be uttered by a machine and wake up your iPhone, Google Home or Echo, respectively. Subsequent inaudible commands could then be issued to the activated voice assistant to execute tasks such as making a phone call, visiting a website or controlling smart home devices.

The video below demonstrates what is called a DolphinAttack. An iPhone is first shown making a call using audible voice commands issued to Siri. The iPhone is then shown locked, and an inaudible voice command in the ultrasonic range is issued to Siri, which recognizes the command and again makes a call. The device owner would not know this had happened unless they were looking at the screen.

What This Means for Voice Assistant Security

The successful DolphinAttack raises several concerns that the previous demonstration hack on the Amazon Echo did not. That attack could only be achieved with physical access to the device, so it was really an issue of physical security, and it was only effective on older devices. The DolphinAttack works on current devices and across a range of voice assistants. However, there are practical limitations.

Distance, Noise, Command Type and Device Use are Mitigating Factors

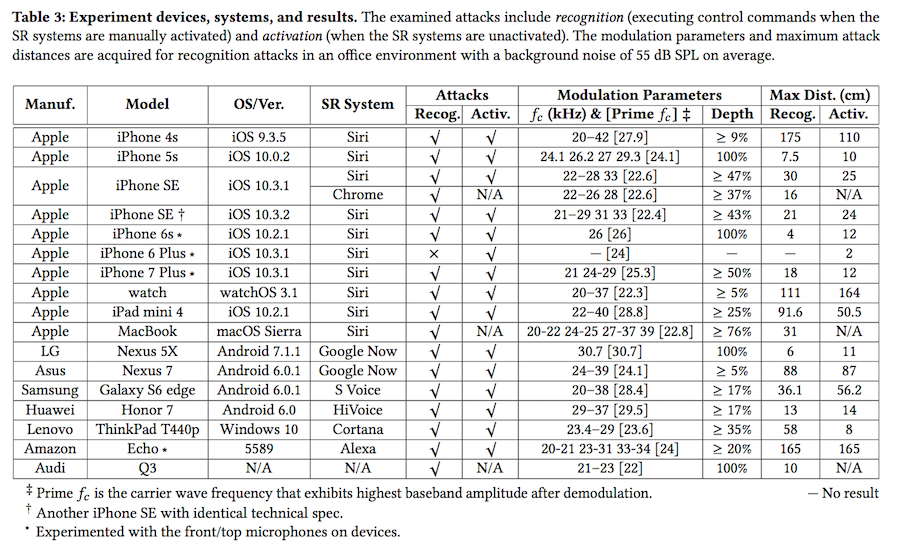

The report differentiated between activation and recognition. Activation was whether the wake word or phrase made the voice assistant ready for a command. Recognition was whether it could interpret a command and execute a task after being activated. Both activation and recognition are required for an attack to be successful. These two factors were impacted significantly by distance and background noise.

The effective range for attacks was between 2 cm (~0.8 inches) and 175 cm (~5.7 feet). Amazon Echo was the most susceptible to long-range attacks, which was expected given the engineering behind its far-field microphones. Interestingly, older iPhone models like the 4s also had substantial far-field recognition capabilities, nearly rivaling Amazon Echo. By contrast, newer iPhone models like the 6s and 7 were only accessible in the near field of 12 cm or less, and the 6s Plus would not recognize the commands. The attacking device must be close to your device for this to have a chance of working.

Background noise was also a big factor. A quiet office setting yielded consistent attack success, while the louder “Cafe” and “Street” settings were harder, with 80% and 30% success rates respectively. The type of command also matters. “Open” and “call” commands were harder to execute successfully than “Turn on airplane mode” and “how is the weather,” because web addresses and phone numbers must be recognized with near perfect accuracy to work. Finally, this only works surreptitiously if the device owner is not currently using the device and it is not visible. Otherwise, the owner would notice the attack.

Preventing DolphinAttacks on Voice Assistants

The report goes on to suggest manufacturers make some hardware alterations to address this vulnerability.

The root cause of inaudible voice commands is that microphones can sense acoustic sounds with a frequency higher than 20 kHz while an ideal microphone should not…Thus, a microphone shall be enhanced and designed to suppress any acoustic signals whose frequencies are in the ultrasound range. For instance, the microphone of iPhone 6 Plus can resist to inaudible voice commands well.

There is also a software-based solution, which the researchers called out using Google Assistant as an example.

The original signal is produced by the Google TTS engine, the carrier frequency for modulation is 25 kHz. Thus, we can detect DolphinAttack by analyzing the signal in the frequency range from 500 to 1000 Hz. In particular, a machine learning based classifier shall detect it.
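To make the software-based idea a little more concrete, here is a minimal sketch of one feature such a classifier might consume: the share of a recording's spectral energy that falls in the 500 to 1000 Hz band mentioned in the report. This is only an illustration under stated assumptions, not the researchers' method. The function name `band_energy_ratio`, the helper `tone`, and the choice of an energy ratio as the feature are all hypothetical, and the excerpt does not say which features or thresholds the classifier actually uses.

```python
import cmath
import math


def band_energy_ratio(samples, sample_rate, low_hz=500.0, high_hz=1000.0):
    """Return the fraction of spectral energy between low_hz and high_hz.

    Hypothetical feature extractor: the band limits come from the quoted
    report, but using an energy ratio as the feature is an assumption.
    """
    n = len(samples)
    if n == 0:
        raise ValueError("samples must not be empty")
    band = 0.0
    total = 0.0
    # Naive DFT over the non-negative frequency bins (0 .. n // 2).
    for k in range(n // 2 + 1):
        coeff = sum(
            x * cmath.exp(-2j * math.pi * k * i / n)
            for i, x in enumerate(samples)
        )
        power = abs(coeff) ** 2
        freq = k * sample_rate / n  # centre frequency of bin k in Hz
        total += power
        if low_hz <= freq <= high_hz:
            band += power
    return band / total if total > 0 else 0.0


def tone(freq_hz, sample_rate=8000, n=400):
    """Hypothetical helper: a pure sine tone used only to exercise the function."""
    return [math.sin(2 * math.pi * freq_hz * i / sample_rate) for i in range(n)]


if __name__ == "__main__":
    # A 700 Hz tone sits inside the band, a 2000 Hz tone sits outside it.
    print(band_energy_ratio(tone(700), 8000))
    print(band_energy_ratio(tone(2000), 8000))
```

The in-band tone should give a ratio close to 1 and the out-of-band tone a ratio close to 0. A real detector would compute features like this on recorded speech and feed them to a trained classifier, as the report suggests, instead of relying on a fixed cut-off.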

This could also be addressed by voice fingerprinting technology similar to what Google Home uses today to differentiate users. That would require an attacker to have a recording of the device owner’s voice to carry out an exploit.

What It Means for Users Today

From a practical standpoint, few users should fear a DolphinAttack today. The attacker needs to be close by, know which device you are using and be in an environment with little background noise. However, the researchers have performed a great service in bringing this issue to light now and offering practical suggestions for manufacturers to patch this vulnerability. As voice assistants become more commonly used, they will draw attention from cyber attackers. That means there will be many more potential exploits that will require patches. Welcome to the world of software.