Mycroft is Growing Fast and Building an Agent so People Won’t Be Dependent on Silicon Valley Giants

Mycroft describes itself as the first open source voice assistant. I recently had the chance to sit down and have a lengthy conversation with company co-founder and CEO, Joshua Montgomery. This is the first part of the interview and has been edited for readability. Voicebot first spoke with Josh in December 2016 and a lot has happened since then.

Josh, it’s been over six months since we last spoke. Can you please update Voicebot readers on where you were and where you are now?

Six months ago we were in talks with Jaguar / Land Rover. We were also pre-500 Startups, so that hadn’t happened yet, and the software was, and still is, in early stages. I think we’ve made a lot of progress in the last six months.

We have had a team at Jaguar / Land Rover since January, where they’ve been working directly with Jaguar’s engineers on a number of different applications. We’ve been integrating into the Jaguar F-Type sports car. We see a clear path forward to become the voice platform for Jaguar. I’m not saying we will, but we see a clear path for it. We were also invited to join 500 Startups and are working to really accelerate growth and accelerate awareness in the open source community and the general business community. There have been a lot of press mentions … and that is feeding into our overall strategy, which has been to position ourselves as the open alternative to Siri, etcetera.

Can you expand on Mycroft’s value proposition?

Silicon Valley is building great technology: Alexa, Siri, Cortana, Google Assistant. At the same time, those companies are also having all the data sent to them. There is this entire community, both in the enterprise world and among individuals, who are looking to use technologies that don’t send their data to Amazon, Google and Facebook. What we are trying to do is build a voice assistant for everybody who is not [one of the] five major Silicon Valley giants. Our approach to the problem is a little unique in that we are building an entire voice stack: automated speech recognition, natural language processing and a speech synthesis engine. We are building tactics and techniques to take the incoming data the system is generating and make it public so the community can train on the underlying technology.

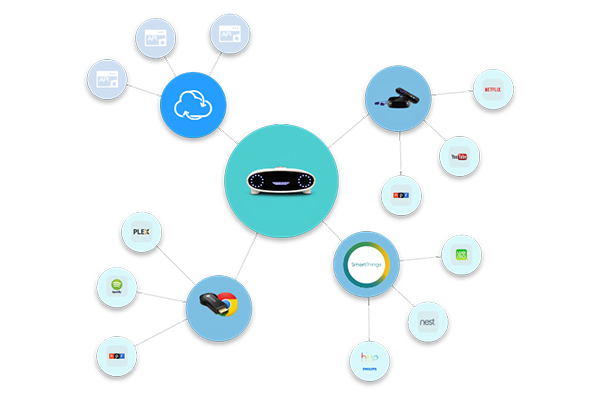

We are unique in being extremely open about everything. Our little speaker, the reference device, is a certified open hardware device. The schematics are online. The CAD files are online. All of the software is open. The underlying concept is that everyone should have access to this type of technology.

Sounds like you see training data as a community resource. How are you thinking about this right now?

We do have a very active community. We have 1,175 members in the community right now and around 6,000 users. So, we are getting a lot of data for people to use. The technology is too important to the future to keep people from it. As a result, we need to democratize it. Machine learning is kind of a unique application of software because you could build the best algorithm out there, but unless you have data to train the algorithm, it doesn’t matter. The data is really a key component.

If we are going to democratize this, we need to make data available not only to us, because we have limited resources for development: 26 full-time staff this summer, of which 10 are interns. So, we have 16 full-time staff. We can’t do everything. The whole thesis behind open source software is that you have this great community of developers out there contributing to the project, and having access to the data set really helps move everything forward. In exchange for the contributions they are making, the company makes a commitment that they can use the resulting technology however they choose.

This makes sense. You have data and so do the big technology platforms. What makes you different then?

One of the things no one is talking about … is how ubiquitous these interfaces are becoming. Given that you’ve got consolidation going on in this space, I’d say there are probably six companies, maybe seven, that have a realistic chance in the space. If you are going to interact with an agent all day, that agent is going to be responsible for screening your phone calls, or accessing your email and eliminating spam. It’s going to be responsible for scheduling meetings and it’s going to be helping you buy things. It’s going to be responsible for all of these various activities that you do all day. Who do you want that agent to represent?

On the path that we are going down today, with the Silicon Valley majors leading the way, the agent that you interact with all day long will be the one that represents that company. So, let’s say you are an Apple user. You love your Apple computer and Apple Watch. You’ve got an iPhone. You’ve got Apple’s HomePod. You will interact with Siri all day and Siri will represent Apple’s interests. So, if you say, “I want to buy a song. I want to listen to Dre’s new album,” it’s going to sell you that through Apple’s iTunes store. And maybe it will not sell it to you through Google, which may have a better price, or Amazon, which may have a better price, or stream it through Spotify. It’s going to make the decision based on Apple’s incentives.

Music is one small piece of that. But think about it in the context of allowing interruptions. Your agent acts as the screen on your phone. The agent will become smart enough to know whether it should interrupt you. My question is, do you want it to interrupt you when a salesperson pays the company that owns your agent to allow it to interrupt and you get a sales call? Or do you want it to interrupt you when it’s your Mom and she is saying your Dad is sick? It’s the concept of agency, which is really big in a lot of open source communities. We view the agent we are building as an agent for the user. Today, that might not be as hugely important as it will be in 10 years. However, if we don’t build an open stack today, all of these agents will represent some corporate entity. And that’s a huge problem. We are the open source opportunity to fix that problem.