RE-WORK Virtual Assistant Summit Round-up: Pixar, Inbenta, Pullstring and More

RE-WORK’s Virtual Assistant Summit is underway this week in San Francisco. The key topics for Day 1 were Advancements in Deep Learning and Speech Recognition, Building Virtual Assistants (VAs), Personalization and Characterization of VAs, and the Future of VAs. Enthusiasm best describes the sentiment of the presentations. Advances in deep learning and the rapid consumer adoption of virtual assistants such as Amazon Alexa and Google Home are creating a lot of activity and fueling innovation.

Speech Recognition and NLP

Almost every speaker touched on speech recognition in some way. Rachael Tatman, a PhD candidate from the University of Washington, led off with some insights from her research into automatic speech recognition and linguistic variation. One of her interesting findings: a plurality of U.S. residents live in the southern United States, but voice assistants have a higher failure rate for southern U.S. dialects and accents. That would seem like an obvious area for the voice recognition platforms to target for improvement.


Stephen Scarr, CEO of eContext, discussed his company’s eight-year journey to build the world’s largest taxonomy of consumer product categories so online merchants could better leverage bots in eCommerce. The taxonomy is hierarchical: it categorizes products by type with increasing levels of specificity. The expectation is that bots using it will better understand customer requests and match them to available retail inventory. The company’s BotBuilder is currently accepting participants into its beta program.
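To make the idea of a hierarchical taxonomy concrete, here is a minimal Python sketch of how a bot might walk from a broad category down to the most specific one that matches a customer request. This is not eContext’s implementation or API; the `Category` class, the `most_specific_path` function, the category names and the keyword sets are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Category:
    """One node in a hypothetical product taxonomy."""
    name: str
    keywords: set[str] = field(default_factory=set)
    children: list["Category"] = field(default_factory=list)


def most_specific_path(root: Category, request: str) -> list[str]:
    """Descend the tree, following a child whenever one of its
    keywords appears in the request, and return the path taken."""
    words = {w.strip(".,!?") for w in request.lower().split()}
    path = [root.name]
    node = root
    while True:
        # Pick the first child whose keywords overlap the request words.
        match = next((c for c in node.children if c.keywords & words), None)
        if match is None:
            return path
        path.append(match.name)
        node = match


# Illustrative data only; a real taxonomy would be far larger.
TAXONOMY = Category("Products", children=[
    Category("Footwear", {"shoes", "boots", "sneakers"}, [
        Category("Running Shoes", {"running", "jogging"}),
        Category("Hiking Boots", {"hiking", "trail"}),
    ]),
    Category("Electronics", {"phone", "laptop", "headphones"}, [
        Category("Headphones", {"headphones", "earbuds"}),
    ]),
])

print(most_specific_path(TAXONOMY, "I need waterproof hiking boots"))
```

A request that matches nothing stays at the root, which a bot could treat as a cue to ask a clarifying question. Simple keyword overlap is used here only to keep the sketch short; a production system would need much more robust language understanding.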


Although not voice-related, Anjuli Kannan from Google also outlined some findings from Gmail’s Smart Reply feature, which suggests responses so users can click a button to reply to a message instead of typing it out. Ms. Kannan repeated a Google statistic from early 2016 that 10% of messages from mobile Inbox by Gmail users were employing Smart Reply. More interesting is the sequence that the team has had to follow to build and improve the system. It looks at each word of the initial message individually and then builds potential responses a single word at a time. The team first worked on generating language, then on creating a grammatical match between call and response, and then on matching style and tone.
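The read-each-word, then write-one-word-at-a-time pattern can be illustrated with a toy Python sketch. This is not Google’s Smart Reply code, which relies on trained neural networks; the `TRIGGERS` and `NEXT_WORD` tables, the intent labels and the scores below are invented for illustration, standing in for what a real model would learn from data.

```python
# Hypothetical lookup tables standing in for a trained model.
TRIGGERS = {"lunch": "meet", "meeting": "meet", "thanks": "thanks", "thank": "thanks"}

# For each intent: previous word -> scored candidates for the next word.
NEXT_WORD = {
    "meet": {
        "<s>": {"sounds": 0.6, "sure": 0.4},
        "sounds": {"good": 0.9, "fine": 0.1},
        "good": {"</s>": 1.0},
        "fine": {"</s>": 1.0},
        "sure": {"</s>": 1.0},
    },
    "thanks": {
        "<s>": {"you're": 0.7, "no": 0.3},
        "you're": {"welcome": 1.0},
        "welcome": {"</s>": 1.0},
        "no": {"problem": 1.0},
        "problem": {"</s>": 1.0},
    },
}


def encode(message: str) -> str:
    """Read the incoming message one word at a time and keep a summary
    (here just an intent label) of what has been seen so far."""
    intent = "unknown"
    for word in message.lower().split():
        intent = TRIGGERS.get(word.strip(".,!?"), intent)
    return intent


def generate_reply(message: str, max_words: int = 6) -> str:
    """Build a reply one word at a time, greedily choosing the
    highest-scoring next word until the end marker is reached."""
    table = NEXT_WORD.get(encode(message))
    if table is None:
        return ""  # no suggestion for messages the toy tables do not cover
    words, prev = [], "<s>"
    for _ in range(max_words):
        candidates = table.get(prev)
        if not candidates:
            break
        prev = max(candidates, key=candidates.get)
        if prev == "</s>":
            break
        words.append(prev)
    return " ".join(words)


print(generate_reply("Want to grab lunch tomorrow?"))
print(generate_reply("Thanks for the help!"))
```

The sketch only covers the first stage described in the talk, generating language word by word. Grammatical agreement with the incoming message and matching of style and tone are left out.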

Building Virtual Assistants

Justin Woo, the Developer Evangelist at Jibo, talked about the path to building a social robot first envisioned in MIT’s Media Lab and then creating an SDK so developers could customize it for their own applications. Foreshadowing some design principles echoed later by Alonso Martinez of Pixar, Mr. Woo talked about the importance of the robot’s eye, which can be used for expression and to display engagement with a user, as well as the smooth, rounded body design and the three rotational axes that enable movement. The non-verbal communication capabilities really shine in Jibo.


Arte Merritt from Dashbot.io discussed how data and analytics are helping developers build better bots. It’s one thing to have a cute robot like Jibo or a Google Home Action in production. It’s another task entirely to understand how your voice application is being received by users and how you can improve performance. Dashbot enables developers to see how conversations are being handled by both voice and chat bots so they can troubleshoot issues and increase engagement and success rates. It also enables bot owners to take over conversations from a bot in real time when it is struggling. (Read Voicebot’s in-depth interview with Arte Merritt.)


Giving Bots Personalities

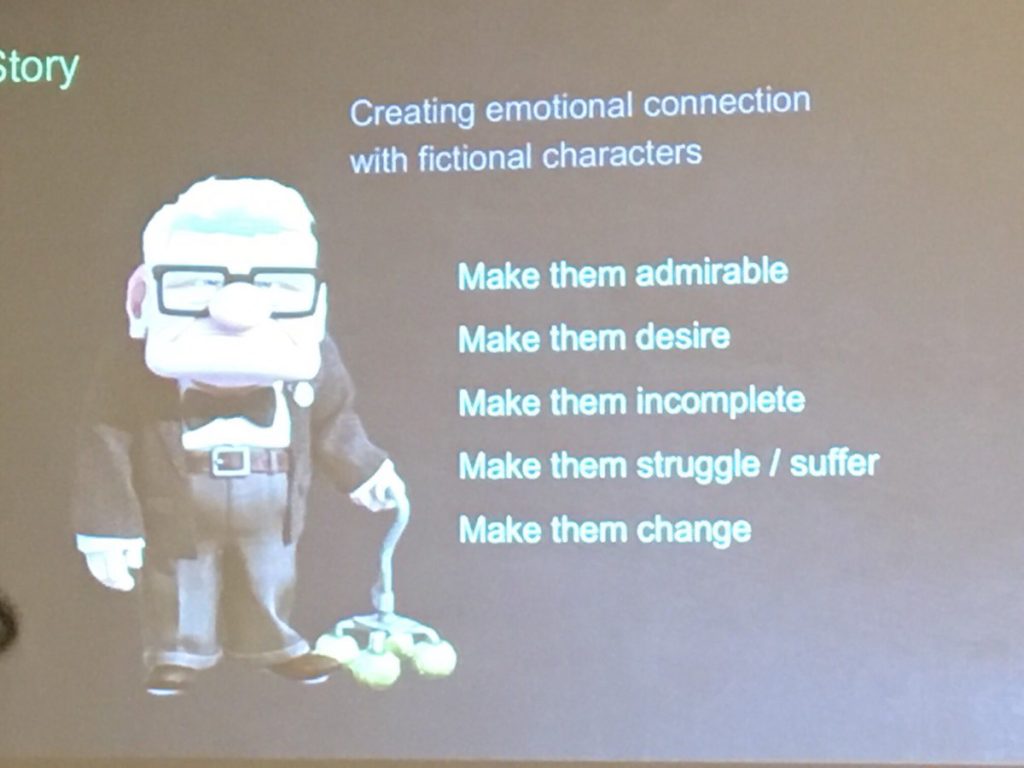

One of the most entertaining presentations came from the entertainment industry. Alonso Martinez, Technical Director at Pixar Animation Studios, talked about the building blocks of empathy and making characters that people fall in love with. While Jibo has a lot of personality, most other bots could elicit more user engagement and affinity by intentionally adding specific character traits. He also demonstrated a cute, orb-shaped robot he had developed himself that communicates by movement, light and sound, without the need for speech. It recognized Mr. Martinez’s speech and reacted appropriately and entertainingly, but spoken communication wasn’t part of the character design, which made for a unique interaction model.


Martinez suggested developers can learn from the movies when building a backstory for their bots:

- Make them admirable – give them a quality that people appreciate

- Make them desire – show that they want something

- Make them incomplete – enable the user to witness growth in the character

- Make them struggle – let the user see the bot learning and overcoming a challenge

- Make them change – enable the bot through struggle and learning to become better

Martinez pointed out that a user who has witnessed a character or bot that has gone through this transformation will be more emotionally connected to it and more likely to become a loyal user. The emotional switching costs are simply higher. These are fascinating ideas that are definitely hard to put into practice but could have a big payoff even if implemented partially. You could see many of these characteristics on display in Mr. Martinez’s Alonso Robot.

Oren Jacob, CEO of Pullstring and a former CTO at Pixar, talked about these concepts from the perspective of the quality of the conversational interaction. He said, “Should we be teaching computer programmers to create conversations as insightful as a Terry Gross interview? Or should we build tools to help Terry Gross create compelling computer conversations?” (Terry Gross is a well-known NPR host.) He went on to suggest that character and conversation in bots represent a new model that draws from both the linear arts, such as screenwriting and stage production, and the interactive arts that we see in entertainment such as video gaming. His thesis is that expertise in the linear and interactive arts is hard to teach to developers, so he is trying to make development more accessible to people with an artistic background.


Why Is AI Back in the Spotlight?

Many people think of artificial intelligence (AI) as being new. Jordi Torras, CEO of Inbenta, knows better. He was working with AI-based expert systems in the late 1980s. Mr. Torras outlined the AI rollercoaster from the 1950s to today, which has included multiple AI springs and winters (more on this from Andreessen Horowitz’s Frank Chen). His answer to why AI is back now and more productive than ever: advances in deep learning. Neural networks today are no longer just responding to queries the way expert systems did in the past. They are identifying new knowledge and learning things that were never taught to them.


Day 2 of the Virtual Assistant Summit will include presentations by ROSS Intelligence, Renault, NXROBO, BuddyGuard, Sense.ly and many others. The industry is moving fast and there are a lot of innovators working to make the technology better for users.
